Long Short Term Memory (LSTM) Networks in a nutshell

To understand Long Short Term Memory (LSTM), we first need to understand Recurrent Neural Networks (RNNs), because an LSTM is a special kind of RNN.

An RNN is a type of neural network (NN) in which the output from the previous step is fed as input to the current step. In other words, an RNN is a generalization of a feed-forward neural network that has an internal memory. RNNs are designed to recognize the sequential characteristics of data and use those patterns to predict the next likely scenario.

First of all, we need to understand what neural networks are before going into the details of RNNs.

Neural Networks

Neural networks are a set of algorithms inspired by the functioning of the human brain. They take a large set of data, process it, and output a prediction of what the data represents.

Neural networks are sometimes called Artificial Neural Networks (ANNs), because they are not natural like the neurons in your brain. They artificially mimic the nature and functioning of a biological neural network. ANNs are used for specific applications such as pattern recognition and data classification.

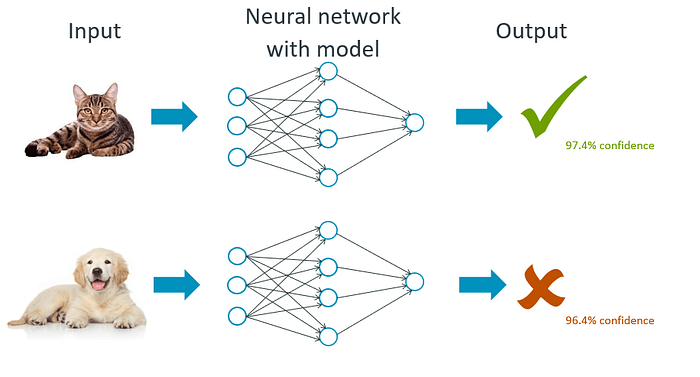

A trained feed-forward neural network can be used to predict an output. For example, it can predict whether an image shows a cat or a dog, as illustrated in the figure.

In this process, the classification of the second image is independent of the classification of the first image.

In the case given above, the output "cat" has no relation to the output "dog". There are several cases where the previous understanding of data is important, such as language translation, music generation, and handwriting recognition. Feed-forward networks have no memory, so they cannot make sense of sequential data such as the sentences in language translation.

So how can we solve the challenge of remembering previous outputs? The answer is RNNs.

Recurrent Neural Network (RNN)

A traditional neural network assumes that all inputs and outputs are independent of each other. However, this assumption is unsuitable for many tasks, such as predicting the next word in a sentence, where it helps a great deal to know which words came before it.

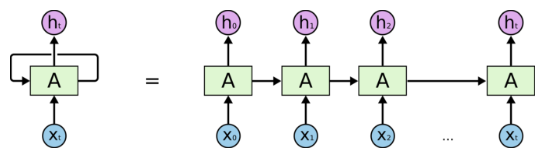

The idea behind RNNs is to make use of sequential information. They are called recurrent because they perform the same operation for every element of a sequence, with the output depending on the previous computations. In other words, they have a “memory” that captures information about what has been calculated so far.

Unlike feed-forward neural networks, RNNs can use their internal state (memory) to process sequences of inputs.
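The recurrence described above can be sketched in a few lines of Python. This is a minimal illustration, not a trained model: the hidden state is a single number, and the weights `w_x`, `w_h` and the bias `b` are made-up values chosen only for the example.

```python
import math

def rnn_step(x_t, h_prev, w_x=0.5, w_h=0.8, b=0.0):
    """One recurrent step: mix the current input with the previous hidden state."""
    return math.tanh(w_x * x_t + w_h * h_prev + b)

def run_rnn(sequence, h0=0.0):
    """Apply the same step (same weights) to every element, carrying the state forward."""
    h = h0
    states = []
    for x_t in sequence:
        h = rnn_step(x_t, h)
        states.append(h)
    return states

# Same values, different order: the final state differs because the
# network remembers what it has already seen.
states_a = run_rnn([1.0, 0.0, 0.0])
states_b = run_rnn([0.0, 0.0, 1.0])
print(states_a[-1], states_b[-1])
```

Because the hidden state is passed from one step to the next, the order of the inputs matters, which is exactly what a feed-forward network cannot capture.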

Long Short Term Memory (LSTM)

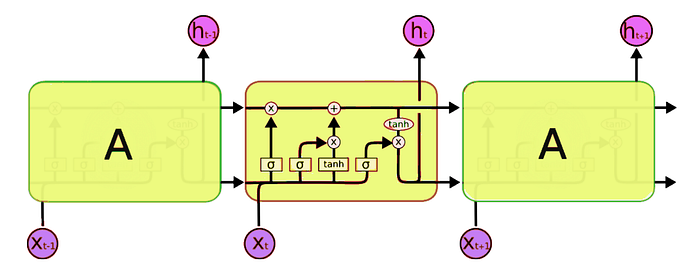

RNNs suffer from short-term memory. If a sequence is long enough, they have a hard time carrying information from earlier time steps to later ones. So if you are trying to process a paragraph of text to make predictions, an RNN may leave out important information from the beginning. This creates the need for Long Short Term Memory (LSTM) networks, a special kind of RNN capable of learning long-term dependencies. LSTMs can remember information for long periods of time.
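The short-term memory problem can be made concrete with a toy experiment. The sketch below uses a scalar RNN with hypothetical, hand-picked weights (`w_x`, `w_h`) and measures how much the first input still affects the final hidden state as the sequence gets longer. Real networks have learned weights and vector states, so the numbers are only illustrative.

```python
import math

def final_state(sequence, w_x=0.5, w_h=0.8):
    """Run a toy scalar RNN over the sequence and return the last hidden state."""
    h = 0.0
    for x_t in sequence:
        h = math.tanh(w_x * x_t + w_h * h)
    return h

def first_input_influence(length):
    """How much the final state changes when only the first input is switched on."""
    with_signal = final_state([1.0] + [0.0] * (length - 1))
    without_signal = final_state([0.0] * length)
    return abs(with_signal - without_signal)

# The longer the sequence, the less of the first input survives to the end.
for length in (1, 5, 20, 50):
    print(length, first_input_influence(length))
```

In this toy setting the trace of the first input shrinks at every step, so by the end of a long sequence almost nothing of it is left.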

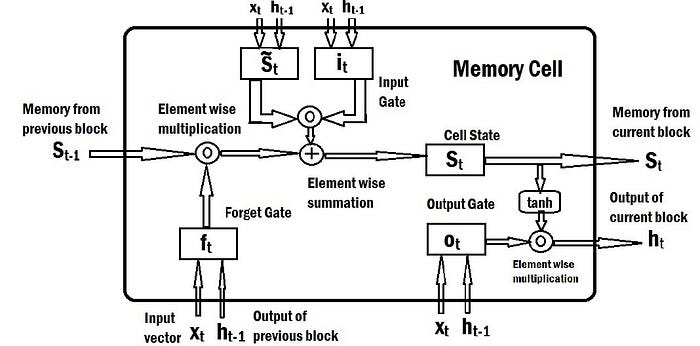

An LSTM works in a three-step process. As the figure shows, an LSTM module has three gates: the forget gate, the input gate, and the output gate.

The core component of an LSTM is the memory cell. It can maintain its state over time, and it consists of an explicit memory (also called the cell state vector) and gating units. The gating units regulate the flow of information into and out of the memory.

Cell State Vector

- The cell state vector represents the memory of the LSTM. It changes through the forgetting of old memory (forget gate) and the addition of new memory (input gate).

Gates

- Gate: a sigmoid neural network layer followed by a pointwise multiplication operator.

- Gates control the flow of information to/from the memory.

- Gates are controlled by a concatenation of the output from the previous time step and the current input, and optionally the cell state vector.
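A single gate, as described in the list above, can be sketched as follows. The function names `gate` and `apply_gate`, the weight matrix `W`, and all numeric values are assumptions made for this illustration. The optional dependence on the cell state vector is left out to keep the example small.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def gate(h_prev, x_t, W, b):
    """Sigmoid layer over the concatenation [h_prev, x_t]; one value in (0, 1) per memory slot."""
    concat = h_prev + x_t  # list concatenation of previous output and current input
    return [sigmoid(sum(w * v for w, v in zip(row, concat)) + b_k)
            for row, b_k in zip(W, b)]

def apply_gate(g, values):
    """Pointwise multiplication: 0 blocks a value, 1 lets it through unchanged."""
    return [g_k * v_k for g_k, v_k in zip(g, values)]

h_prev = [0.1, -0.2]           # output from the previous time step
x_t = [1.0]                    # current input
W = [[0.5, -0.3, 0.8],         # one row per memory slot (made-up weights)
     [-0.6, 0.1, 0.2]]
b = [0.0, 0.0]
memory = [2.0, -3.0]

g = gate(h_prev, x_t, W, b)
kept = apply_gate(g, memory)
print(g, kept)
```

Because the sigmoid output always lies strictly between 0 and 1, a gate can only scale information down, never amplify it.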

Forget Gate

- Controls what information to throw away from memory.

- Decides how much of the past you should remember.

Update/Input Gate

- Controls what new information is added to the cell state from the current input.

- Decides how much of this unit is added to the current state.

Output Gate

- Conditionally decides what to output from the memory.

- Decides which part of the current cell makes it to the output.
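The three gates and the cell state described above can be combined into a single update step. The sketch below follows the commonly used LSTM formulation, which also includes a tanh "candidate" layer that this article does not discuss. The helper names (`make_params`, `linear`, `lstm_step`), the sizes, and the randomly initialized weights are assumptions for illustration, not a trained or production implementation.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def linear(W, b, v):
    """Compute W @ v + b for a matrix stored as a list of rows."""
    return [sum(w * x for w, x in zip(row, v)) + b_k for row, b_k in zip(W, b)]

def make_params(input_size, hidden_size, seed=0):
    """Random (untrained) weights for the forget, input, output and candidate layers."""
    rng = random.Random(seed)
    width = hidden_size + input_size
    params = {}
    for name in ("f", "i", "o", "c"):
        params["W_" + name] = [[rng.uniform(-0.5, 0.5) for _ in range(width)]
                               for _ in range(hidden_size)]
        params["b_" + name] = [0.0] * hidden_size
    return params

def lstm_step(x_t, h_prev, c_prev, params):
    """One LSTM time step; returns the new output h and the new cell state c."""
    concat = h_prev + x_t  # previous output concatenated with the current input
    f = [sigmoid(z) for z in linear(params["W_f"], params["b_f"], concat)]  # forget gate
    i = [sigmoid(z) for z in linear(params["W_i"], params["b_i"], concat)]  # input gate
    o = [sigmoid(z) for z in linear(params["W_o"], params["b_o"], concat)]  # output gate
    # Candidate memory (assumed standard formulation, not covered in the text).
    c_tilde = [math.tanh(z) for z in linear(params["W_c"], params["b_c"], concat)]
    # Forget part of the old memory, then add the gated new memory.
    c = [f_k * c_k + i_k * g_k for f_k, c_k, i_k, g_k in zip(f, c_prev, i, c_tilde)]
    # The output gate decides which part of the cell state is exposed.
    h = [o_k * math.tanh(c_k) for o_k, c_k in zip(o, c)]
    return h, c

params = make_params(input_size=1, hidden_size=2)
h, c = [0.0, 0.0], [0.0, 0.0]
for x_t in ([1.0], [0.5], [-1.0]):
    h, c = lstm_step(x_t, h, c, params)
print(h, c)
```

Note how the cell state is updated only by scaling and adding. When the forget gate is close to 1 and the input gate is close to 0, the old memory passes through almost unchanged, which is what lets an LSTM carry information across many time steps.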

With its memory and gating mechanisms, an LSTM is a good choice for sequences that have long-term dependencies.

Applications of LSTMs

- Handwriting recognition

- Music generation

- Language translation

- Image captioning